Firefly Protein Enables Visualization of Roots in Soil

Plants form a vast network of below-ground roots that search soil for needed resources. The structure and function of this root network can be highly adapted to particular environments, such as desert soils, where plants like mesquite develop tap roots capable of digging 50 meters deep to capture precious water resources. Excavation of root systems reveals these kinds of adaptations but is laborious and time-consuming, and it does not provide information on how growing roots behave.

Despite their importance, roots remain one of the most mysterious parts of the plant. They cannot easily be studied since they grow hidden in soil. Most of what scientists know about roots today comes from either digging up roots, or growing them in transparent media that do not reflect their natural environment.

“To visualize the intricate growth patterns and functions of roots we needed to develop a different approach,” Dinneny explained. “We were very mindful that the method had to allow us to vary conditions, in order to present roots with different combinations of environmental conditions that simulate important stresses such as drought or low-fertility soil.”

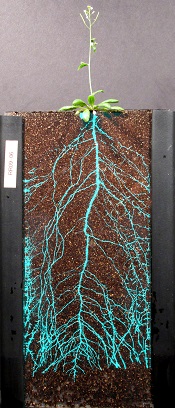

The team’s imaging system is called GLO-Roots (for Growth and Luminescence Observatory for Roots). It is an integrated platform for growing plants in soil in custom-built vessels, imaging roots using bioluminescence, and analyzing root growth, architecture and gene expression using software designed in collaboration with Guillaume Lobet at the University of Liège.

The GLO-Roots system relies on plants genetically engineered to produce luciferase, an enzyme from fireflies, which causes the roots to glow in the dark of the soil. Light-sensitive cameras then detect the light emitted by the roots, allowing researchers to distinguish the roots from the surrounding soil. Using multiple genetically encoded luciferases, each emitting light of a different wavelength, the team was able to track whole root system architecture and gene expression simultaneously in adult plants.

“Roots grow through a pathfinding process, somewhat like neurons, and must make decisions regarding which direction to grow and when to branch. This is heavily influenced by soil quality and the location of water and nutrients. Our ability to track gene expression using GLO-Roots is a game changer that will enable an understanding of the molecular events enabling these root decisions,” explained Dinneny.

The Dinneny lab used GLO-Roots to study the response of roots to environmental stresses relevant to sustainable agriculture. Understanding how root architecture is altered by environmental conditions and stresses can teach scientists how plants adapt to their surroundings and also about the genetic and biochemical activities underlying these adaptive processes.

The team’s findings may be particularly important for understanding how plants survive during droughts. The GLO-Roots system enabled Dinneny and his team to track the growth rate and direction of dozens of root tips simultaneously and relate these to the amount of water present in the soil surrounding the roots. Rubén Réllan-Álvarez, the lead post-doctoral scientist on the project and current assistant professor at Langebio, Cinvestav in Irapuato, México, found that simulated drought caused roots to grow deeper into the soil column, presumably enabling access to untapped water resources.

“This project combines two of Carnegie Science’s historical strengths: development of advanced imaging platforms and understanding of how plants acclimate to their environment. Our study shows that roots have several tricks that enable efficient recovery of water from their environment,” Réllan-Álvarez said.

The GLO-Roots system will allow researchers to characterize the genetic pathways that enable efficient exploration of the soil environment, which may help in developing strategies for sustainable and drought-tolerant agriculture.