Using Supercomputers to Predict Dispersal of Chemicals and Pollutants


Motivated by a deadly chemical attack in Syria, Kiran Bhaganagar, professor of mechanical engineering at the University of Texas at San Antonio, and her team from the Laboratory of Turbulence Sensing and Intelligence Systems are pursuing research that may help save lives during an airborne chemical attack.

During the April 4, 2017 Syrian chemical event, a plume of sarin gas spread more than 10 kilometers (about six miles), carried by wind turbulence, killing more than 80 people and injuring hundreds. The team used the Texas Advanced Computing Center (TACC) Stampede2 supercomputer and innovative computer simulation models to replicate the dispersion of the chemical gas in the Syrian event. Results were published in Natural Hazards.

“I was horrified by the attack and wanted to do something useful. The accuracy of our computer simulation of the chemical dispersion showed the ability to capture real world conditions despite a scarcity of information. Our team continues research in simulating air turbulence and dispersion of chemicals and pollutants. This work would not be possible without the power of supercomputers. In the Syria research, we got background information and added it to our computer model. Our models captured the boundaries of the plume and the cities where it spread. We saw it was very similar to what was reported in the news. That gave us confidence that our system works and that we could use it as an evacuation tool. We have developed a coarser simulation model to help cut down the processing time and continue research using the coarser model for buoyant air turbulence modeling. Our goal is to cut simulation time of an event down, so it can be used by first responders to help them know areas to evacuate,” states Bhaganagar.

Air turbulence and how chemicals and pollution spread

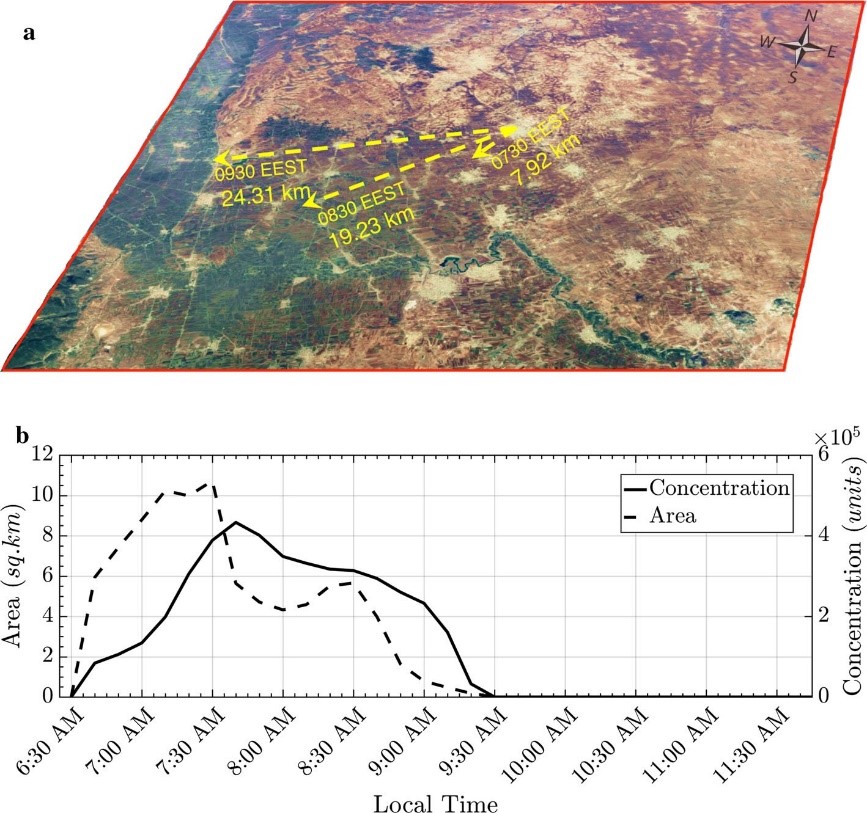

Chemicals and pollutants, such as vehicle exhaust, travel differently from other particulates in the atmosphere because they create their own microclimates, a phenomenon called buoyant turbulence. The flow depends on the density of the material, how it mixes with the atmosphere and the time of day. Turbulence gradients are very sharp at night, in the early morning or when winds are calm, which means the chemicals travel faster. Figure 1 shows examples of chemical plume development.

Figure 1. Chemical plume development in time. Courtesy of Sudheer Bhimireddy and Kiran Bhaganagar.

Buoyant air turbulence model used in the research

Turbulence is mathematically difficult to model and predict. The team’s computer model must first answer basic questions, including “what is the source of the chemical or pollution?” and “what direction will it travel?” The team looks at the physics of the turbulence event to break down all the elements, such as wind direction, temperature, time of day and season. This information comes from a variety of sources, including local information, weather channels and sensor data. Bhaganagar and Ph.D. candidate Sudheer Bhimireddy integrated their buoyant turbulence model with the high-resolution Advanced Research Weather Research and Forecasting (WRF) model to understand the role of atmospheric stability in the short-term transport of chemical plumes. The research was published in Atmospheric Pollution Research.

During the Syria air turbulence simulation, running a high-resolution model on the Stampede2 supercomputer took five full days of processing, which is not feasible during an actual chemical dispersion emergency. To help solve this issue, Bhaganagar developed a coarser simulation model that uses a database of seasonal conditions as background information to speed up the calculations. This brings the entire simulation time down to four hours of processing. The team is researching how to cut processing time even further so that data is available to first responders as quickly as possible. For example, if data is available from sensors, the goal is to cut the simulation time of an event down to 30 minutes. Having data from sensors is ideal and greatly increases the accuracy of the prediction, to as much as 90 percent. Figure 2 shows examples of calculations from the Syria event.
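To put those run times in perspective, the short Python sketch below converts the figures quoted above (five days, four hours and the 30-minute goal) into speedup factors. The function and variable names are illustrative only and are not taken from the team's software.

```python
# Run times quoted in the article, converted to hours.
HIGH_RES_HOURS = 5 * 24     # five full days on Stampede2
COARSE_HOURS = 4.0          # coarser model using the seasonal database
SENSOR_GOAL_HOURS = 0.5     # 30-minute goal when sensor data is available


def speedup(baseline_hours: float, new_hours: float) -> float:
    """Return how many times faster the new run is than the baseline."""
    if new_hours <= 0:
        raise ValueError("new_hours must be positive")
    return baseline_hours / new_hours


coarse_speedup = speedup(HIGH_RES_HOURS, COARSE_HOURS)
goal_speedup = speedup(HIGH_RES_HOURS, SENSOR_GOAL_HOURS)
remaining_factor = speedup(COARSE_HOURS, SENSOR_GOAL_HOURS)

print(f"Coarse model vs. high-resolution run: {coarse_speedup:.0f}x faster")
print(f"30-minute goal vs. high-resolution run: {goal_speedup:.0f}x faster")
print(f"Further reduction still needed from four hours: {remaining_factor:.0f}x")
```

In other words, the coarser model is already about 30 times faster than the five-day run, and reaching the 30-minute goal would require roughly another eightfold reduction.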

Figure 2. a) Radial extent of the plume elements is 7.92 km from the source at 7:30 a.m., 19.23 km from the source at 8:30 a.m., and 24.3 km from the source at 9:30 a.m.; b) ground coverage area in sq. km and (on secondary y-axis) ground concentrations versus time from initial release of the chemical. Courtesy of Sudheer Bhimireddy and Kiran Bhaganagar.
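The radial extents in the Figure 2 caption can be turned into an average rate of plume spread between snapshots. The sketch below is only an arithmetic illustration based on the three caption values; it is not part of the team's model, and the names are hypothetical.

```python
# (hour of day, radial extent in km) taken from the Figure 2 caption.
observations = [(7.5, 7.92), (8.5, 19.23), (9.5, 24.3)]


def radial_growth_rates(obs):
    """Average radial growth (km/h) between consecutive snapshots."""
    rates = []
    for (t0, r0), (t1, r1) in zip(obs, obs[1:]):
        rates.append((r1 - r0) / (t1 - t0))
    return rates


rates = radial_growth_rates(observations)
for (t0, _), (t1, _), rate in zip(observations, observations[1:], rates):
    print(f"{t0:.1f} h to {t1:.1f} h: {rate:.2f} km/h")
```

On these numbers the plume edge advanced at roughly 11.3 km/h between 7:30 and 8:30 a.m. and at about 5.1 km/h over the following hour.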

How air turbulence data is processed

The team breaks down data, such as wind direction, into small pieces and runs each piece on a separate computer processor. During the initial research, data runs on the team’s cluster workstation, with each processor solving a specific problem. The team uses up to 128 processors in the calculations, which is like using 128 separate computers. Once all the individual equations are solved, final post-processing simulation is done on the TACC Stampede2 supercomputer, currently one of the most powerful and significant supercomputers in the U.S. for open science research. Stampede2 is an 18-petaflop system containing 4,200 Intel Xeon Phi nodes; it also uses Intel Xeon Scalable processors and the Intel Omni-Path Architecture.
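The idea of cutting data into pieces and handing each piece to its own processor can be sketched in a few lines of Python. This is a generic illustration, not the team's code: the sample data is made up, the helper names are hypothetical, and a small thread pool merely stands in for the 128 separate processors (production HPC codes typically use a message-passing library instead).

```python
from concurrent.futures import ThreadPoolExecutor


def split_ranges(n_items, n_workers):
    """Split range(n_items) into n_workers contiguous, near-equal (start, stop) ranges."""
    if n_workers <= 0:
        raise ValueError("n_workers must be positive")
    base, extra = divmod(n_items, n_workers)
    ranges, start = [], 0
    for worker in range(n_workers):
        size = base + (1 if worker < extra else 0)  # first `extra` workers get one more item
        ranges.append((start, start + size))
        start += size
    return ranges


def process_chunk(values, bounds):
    """Stand-in for the per-processor work: here, just sum one slice."""
    start, stop = bounds
    return sum(values[start:stop])


N_WORKERS = 128  # processor count mentioned in the article
wind_speed_samples = [float(i % 10) for i in range(1000)]  # made-up data

ranges = split_ranges(len(wind_speed_samples), N_WORKERS)
with ThreadPoolExecutor(max_workers=8) as pool:
    partial_sums = list(pool.map(lambda b: process_chunk(wind_speed_samples, b), ranges))

total = sum(partial_sums)
print(f"{len(ranges)} chunks, combined result = {total}")
```

Each worker sees only its own slice, and the partial results are combined at the end, which mirrors the pattern described above of solving the pieces separately before a final post-processing step.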

A supercomputer is required for the final simulation because up to one terabyte of data may need to be processed, which cannot be completed on a standard cluster system. According to Bhaganagar, “I wouldn't have even attempted these research projects if I wasn't able to access TACC supercomputers. They're absolutely necessary for developing new turbulence models that can save lives in the future.”

Optimizing the buoyant turbulence code

Bhaganagar’s team created the software for the buoyant turbulence model and optimized the code so that it can effectively process data on a parallel-processing supercomputer. Master's and Ph.D. students modified the code so that the data fits in a given amount of RAM, optimized the code-level runtime and the handling of 3D matrices, and verified that the algebraic equations are processed effectively.
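To illustrate why fitting 3D matrices into a fixed amount of RAM matters, the sketch below estimates the memory footprint of a gridded simulation and how many slabs it would have to be cut into to respect a memory budget. The grid size, number of fields and RAM budget are hypothetical values chosen for the example; the article does not report the team's actual figures.

```python
import math

BYTES_PER_FLOAT64 = 8


def field_bytes(nx, ny, nz, n_fields=1, bytes_per_value=BYTES_PER_FLOAT64):
    """Memory needed to hold n_fields 3D arrays of shape (nx, ny, nz)."""
    return nx * ny * nz * n_fields * bytes_per_value


def slabs_needed(nx, ny, nz, n_fields, ram_budget_bytes):
    """Smallest number of slabs along z such that each slab fits in the budget."""
    per_layer = field_bytes(nx, ny, 1, n_fields)
    if per_layer > ram_budget_bytes:
        raise ValueError("a single horizontal layer exceeds the RAM budget")
    layers_per_slab = ram_budget_bytes // per_layer
    return math.ceil(nz / layers_per_slab)


# Hypothetical example: 1024 x 1024 x 512 grid, 5 fields, 4 GiB per process.
NX, NY, NZ, N_FIELDS = 1024, 1024, 512, 5
RAM_BUDGET = 4 * 2**30

total_bytes = field_bytes(NX, NY, NZ, N_FIELDS)
n_slabs = slabs_needed(NX, NY, NZ, N_FIELDS, RAM_BUDGET)

print(f"Full grid: {total_bytes / 2**30:.0f} GiB")
print(f"Slabs needed to stay within budget: {n_slabs}")
```

With these assumed values the full grid needs 20 GiB, so it would have to be processed in six slabs to stay within a 4 GiB budget per process.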

Challenges for future research

“A goal of the Syria simulation was to determine if our simulation model could predict the radius of a chemical plume if the source was known. That simulation plume prediction closely matched the actual plume location which gave us confidence to continue our work in an effort to help save lives. In addition, we are doing research on how pollution gets dispersed in the atmosphere which can have broad scientific value.

“However, we need to be able to do simulations in real-time to more quickly and accurately predict how chemical and pollution plumes move in the atmosphere. The ability to perform simulations of massive amounts of data cannot be accomplished only by software code or optimization. Additional innovation needs to occur at the hardware level with chip-manufacturers making modifications to processors and accelerators so that real-time processing is available to scientists,” concludes Bhaganagar.

###

Dr. Kiran Bhaganagar, director of the Laboratory of Turbulence Sensing and Intelligence Systems at the University of Texas at San Antonio, has been elected to the grade of Associate Fellow – Class of 2019 in the American Institute of Aeronautics and Astronautics (AIAA): https://www.aiaa.org/Class-of-2019-Associate-Fellows/

TACC Stampede2 supercomputer: https://www.tacc.utexas.edu/systems/stampede2

Linda Barney is the founder and owner of Barney and Associates, a technical/marketing writing, training and web design firm in Beaverton, OR.

This article was produced as part of Intel’s HPC editorial program, with the goal of highlighting cutting-edge science, research and innovation driven by the HPC community through advanced technology. The publisher of the content has final editing rights and determines what articles are published.