Revolutionizing Metabolomics Practices With Machine Learning

Complete the form below to unlock access to ALL audio articles.

Natural product drug discovery is driven by scientific and technological advances that help us visualize the rich analytical data we can generate. The rise of metabolomics allows us to simultaneously analyze multiple metabolites in a biological sample, a process which has been part of clinical research, pharmaceutical discovery and development for nearly two decades.

While these two approaches – targeted and untargeted metabolomics – offer many analytical benefits to the life sciences industry on their own, the integration of artificial intelligence and machine learning enables us to take this to the next level. We’ll explore how later on but first, let’s understand the difference between these two approaches.

Understanding metabolomics

Targeted metabolomics measures the landscape of small molecules, commonly known as metabolites, within living organisms. When scientists are interested in a very specific set of known metabolites, they can tune the protocols to detect this subset of the global metabolome – referring to small molecules and their interactions within a biological system.

Untargeted metabolomics refers to the identification and analysis of the entire global metabolome within a living organism. This process, which involves a more complicated set of experiments, presents more challenges, but has the potential to uncover rich datasets. These datasets can identify active compounds in bioactive extracts, discover new disease mechanisms, and characterize new ways in which drugs target specific diseases to create an interaction profile.

Advances in metabolomics and analytical chemistry provide the tools we need to pinpoint active compounds associated with bioactivity. The collected data can be aggregated and synthesized to provide a better understanding of the individual compounds responsible for targeting these diseases, but this process can be long and often discourages the industry from focusing efforts on natural product drug discovery.

Hurdles with identifying compounds from their mass spectra

Metabolomics is the study of all metabolites within an organism. Mass spectrometry produces a large amount of data for each sample. Combining these two methods with artificial intelligence and machine learning techniques makes these tools well-positioned to tackle larger data analysis problems. With enough data, we can train these platforms to screen various combinations of extracts and quickly determine which common structures are responsible for bioactivity. This cuts down on process time significantly and allows researchers to efficiently identify medicinally beneficial compounds that can then be turned into high-potential drug candidates.

Unlike DNA or the amino acids of proteins, metabolites are not composed of linear sequences of building blocks. As a result, researchers cannot take advantage of that inherent structure in the data or leverage sequencing to identify compound structures. Mass spectrometry is also difficult to use with metabolites, limiting the amount of metabolomic insight researchers can gain.

Another method that can be used is MS2 matching. This allows two similar spectra to be compared and measured. Scientists can construct vectors of likely matches for the individual mass peaks and then measure the cosine similarity of those vectors. They can then find close or exact matches in databases of known spectra to help identify the compounds or their families, an inference starting point for chemical structures of the molecule.

However, like most experimental data, the MS2 method does not always provide an exact match, which can make alignment difficult to find. Spectral reference libraries also have fairly low coverage, meaning that only a small percentage of the molecules of any given organism are described and registered in spectral databases.

Spectral similarity is also not identical to structural similarity, which means researchers have to often settle for the closest match. This is a problem because the smallest changes to molecules can result in dramatically different fragmentation patterns and thus dramatically different spectra. Failure to measure compound similarity makes it extremely hard to identify compounds, cluster compounds into families, and analyze structure-to-function relationships.

Turning to machine learning to create comprehensive mass spectra analyses: the right match

Mass spectra interpretation is best-suited for machine learning because the main processes – library matching, molecular networking, and molecule prediction – fundamentally rely on data representation. This makes deep learning a powerful tool for representing the complicated relationship between multiple data modes. Huber and colleagues recently demonstrated the power of machine learning to interpret spectra by creating the first Spec2vec model, which uses Word2vec to learn representations of mass peaks based on their co-occurrence in MS2 spectra across large reference databases. The method is unsupervised, based only on learning co-occurrence, but even without supervision, it creates a similarity measure that approximates structural similarity strikingly better than cosine similarity measures. However, there is still room for an even better approximation.

Similarity is not the only area for improvement in structural annotation via machine learning. Even where no close match exists in libraries, it is possible to infer certain properties and structural elements of molecules from their spectra. Structure and property inference is about pattern recognition. Domain experts can often gain enough expertise to “read” from mass spectra very specific differences in molecular structures. This suggests that information in the spectra may be enough to hypothesize the compound classes and structure of the molecules they represent.

Tools like CSI:FingerID and SubFragment-Matching have shown that machine learning algorithms can learn to predict useful properties and substructures of molecules from spectra alone. Combining predictive algorithms, powerful data encoding and predictions with molecular generation models, provides models that can better translate spectrum from an unknown compound directly into a predictive structure.

A model is only as good as its data. Public metabolomics reference databases like those in GNPS, MetaboLights, and Metabolomics Workbench are extremely useful, but large reference datasets are built around specific classes of interest and compound availability, not necessarily to maximize the information available to a machine learning algorithm. Optimizing for drug discovery program guidance would involve strategies like feeding massive amounts of data into machine learning algorithms.

The possibilities are endless for drug discovery with machine learning for metabolomics

Similarity and structure prediction is only the beginning stages of how machine learning might revolutionize metabolomics-focused drug discovery. As researchers continue to run extracts and mixtures through bioactivity assays, they can leverage the natural diversity of molecules to infer which chemical structures are responsible for desirable efficacy, toxicity, and pharmacokinetic properties, based only on the existing variability of natural molecules.

Being able to identify small structural changes in molecules can help us track metabolic changes to drugs as they pass through the body and identify reaction centers. Better dimensionality reduction methods and clustering will enable us to characterize large numbers of molecules in many plants at once and optimize sourcing for our compounds. Data extraction, neuro-linguistic programming, and knowledge graphs can also enable us to better understand a wealth of data around various molecule properties, connect molecules to cellular pathways, and prioritize lead generation, target ID, and production.

We are at a turning point where mass spectrometry equipment improvements and computational workflows have enabled large amounts of data to be collected and processed at once. Moreover, machine learning – particularly deep neural networks – enables the type of pattern recognition necessary to automate mass spectral interpretation. This opens up the possibility of scaling metabolomics to a degree that was previously out of reach.

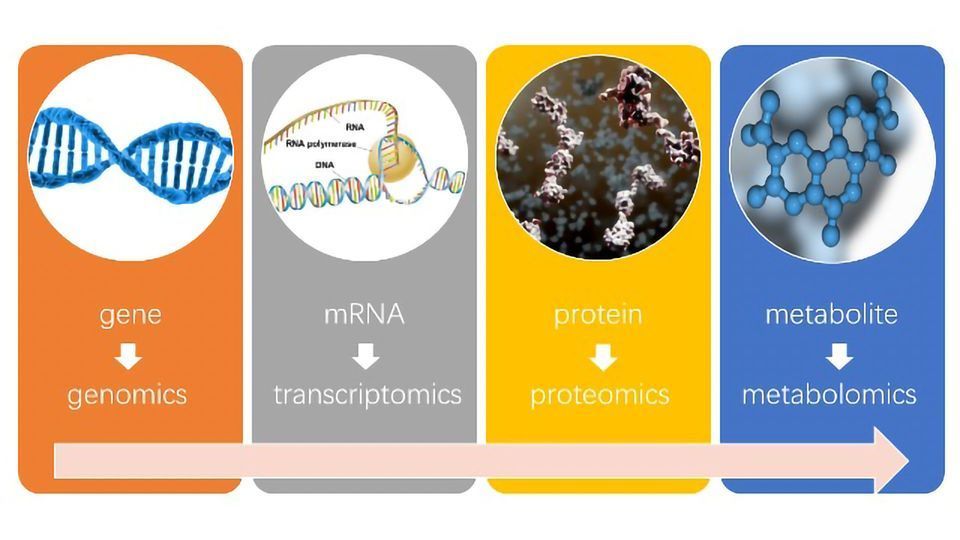

The technology is converging from the technical and computational sides, but machine learning for metabolomics is still a relatively small field compared to genomics, transcriptomics, or proteomics. The advances we make over the next 3-5 years will create an enormous opportunity for applied machine learning to revolutionize metabolomics and by extension the numerous industries that rely on it.

About the author:

David Healey is vice president of data science at Enveda Biosciences specializing in machine learning for life sciences and health care. He helped build the data science and machine learning programs at Recursion Pharmaceuticals. His expertise is in deep neural networks, computer vision, natural language, and graph models. David received his PhD in Biology at MIT's Center for the Physics of Living Systems.

Footnotes:

[1] Some terminology: untargeted metabolomics attempts to quantify all metabolites in a sample, as opposed to targeted metabolomics where there is a specific class or set of classes of molecules of interest to quantify. This article will use the term metabolomics as a shorthand for specifically the untargeted variety.

[2] Tandem mass-spectrometry is the most common way of measuring the metabolome, but there are other methods as well, including NMR.