The Markram Interviews Part One: An Introduction to Simulation Neuroscience

In the first of our exclusive three-part interview series with Blue Brain Project Founder and Director Professor Henry Markram, we discuss the basics of simulation neuroscience. Markram, one of the leading proponents of the field, comments on why the idea of simulating the brain has raised concerns from experimental and theoretical neuroscientists and answers whether in silico techniques could ultimately replace experimental approaches.

Ruairi Mackenzie (RM): What is the main argument of experimental neuroscientists against simulation neuroscience?

Henry Markram (HM): Biologists are very aware of the daunting complexity of the brain at all its levels and of the endless phenomena it can give rise to when it is running or spluttering. Experimental neuroscientists experience directly what it is like to sit inside a labyrinth with infinite possible directions to the exit. It is therefore natural and completely understandable that the gut reaction is that it is impossible to build the brain mathematically from the ground up. We just do not know enough, is the claim. Agreed, but how much do we need to know before we can build it? That no one knows. The mist clears when one realizes that one does not have to measure everything in order to begin and that beginning can accelerate experimental neuroscience.

RM: What is the main argument of theoretical neuroscientists against simulation neuroscience?

HM: Theoretical neuroscience today can be seen as having the notion that one can only understand a complex system by simplifying it. Simplifying a complex phenomenon is seen as a process of squeezing out all the “unnecessary” detail until only the essence remains. This essence is regarded as the ultimate understanding.

Translated to biology, this viewpoint means that looking at how a mutation on one of the 20,000 odd genes used by the brain can change one’s preferences, talents and personality, or looking at how attaching a phosphate molecule onto a particular spot of one of the 100,000 odd types of proteins in a neuron can help us store memories or how when a neuron connects onto too many other neurons it may cause autism, is all unnecessary detail and irrelevant to understanding the brain.

It is difficult to understand this reaction to what I see as a wondrous universe of biological detail. On a scientific level, some of the voiced criticism points to a claim that one can never build an accurate model of the brain because there are just too many parameters. With so many parameters, they argue, one can never avoid overfitting the model and so the model will always be meaningless and is just a big waste of time. It is best to just build the simplest possible model where the parameters are fewer and hence the degrees of freedom are small enough to understand.

Ironically, theoreticians are absolutely right, but only for the kinds of models that are commonly built of the brain. If the parameters are so simplified that they cannot be constrained by biological data, then they are free parameters and you do have an overfitting problem. If they come from biological measurements, they are not free at all; they are constrained to a range of values that is found in the brain. The other factor that has not been taken into account is perhaps one of the most important and fundamental principles of all of biology, namely that every biological parameter in the brain is constrained by virtually every other parameter in the brain. The parameters can therefore not just assume any value, as every other parameter is holding it in place.

Let’s look at an example. Our body is made up of different kinds of cells. The way we build different cells is by expressing a different combination of the 20,000 odd genes that we inherit from our parents. In the brain, each type of neuron is built by expressing roughly half of these genes. Theoretically, the number of types of cells that can be built by the brain is the number of combinations of genes that we can express. Now it would take a very long time to even try to calculate how many combinations there are when one can choose 10,000 possible genes from a set of 20,000, but what is sure is that it is more than the number of sub-atomic particles in this, and probably every other universe we can imagine. That is the kind of degree of freedom problem one has with conceptual models.

Biology, on the other hand, is a minuscule subset of all theoretical possibilities. We get down to this minute subset of the theoretical possibilities by allowing everything in the brain to constrain everything else. This is the fundamental principle that has allowed the brain to evolve and that has allowed this complex organ to be so remarkably stable despite being ‘knocked around’ by life and disease.
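As an editorial aside, the size of the gene-combination count in the example above can be estimated directly. The sketch below is illustrative only: it takes the 20,000 and 10,000 figures from the interview, while the figure of roughly 10^80 particles in the observable universe is a commonly quoted order-of-magnitude estimate and an assumption that does not come from the interview. The function name `log10_combinations` is hypothetical.

```python
import math

TOTAL_GENES = 20_000      # "20,000 odd genes" (from the interview)
EXPRESSED_GENES = 10_000  # "roughly half of these genes" (from the interview)

# Assumption, not from the interview: a commonly quoted order-of-magnitude
# estimate for the number of particles in the observable universe (10**80).
PARTICLES_LOG10 = 80


def log10_combinations(n: int, k: int) -> float:
    """Return log10 of C(n, k), the number of ways to choose k items from n.

    Works with log-gamma so the huge integer never has to be printed
    or converted to a string.
    """
    ln_comb = math.lgamma(n + 1) - math.lgamma(k + 1) - math.lgamma(n - k + 1)
    return ln_comb / math.log(10)


exponent = log10_combinations(TOTAL_GENES, EXPRESSED_GENES)
print(f"C({TOTAL_GENES}, {EXPRESSED_GENES}) is about 10^{exponent:.0f}")
print(f"Exceeds the particle estimate by a factor of about 10^{exponent - PARTICLES_LOG10:.0f}")
```

Under these assumptions the count comes out at roughly 10^6018, which is consistent with the claim that it dwarfs any particle count one might plausibly assign to the universe.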

RM: Why have you said that we cannot experimentally map the brain?

HM: Neuroscience is a very big, Big Data challenge. The human brain uses over 20,000 genes, more than 100,000 different proteins, more than a trillion molecules in a single cell, nearly 100 billion neurons, up to 1,000 trillion synapses and over 800 different brain regions to achieve its remarkable capabilities. Each of these elements has numerous rules of interaction. Not only must we treat understanding the brain as a Big Data challenge, but we also need the tools to understand how all these elements fit and work together. Mapping the brain is not the same kind of challenge as mapping the human genome because it requires mapping the brain across many levels of description, all the interactions between all the elements, and how they vary with development, experience, aging, strains, species, gender and a plethora of diseases. We will need to use informatics, data science and simulation to organize our data, fill vast gaps in our data, test our knowledge and make predictions of what we don’t yet know to produce maps of the brain under any circumstance.

RM: Can you give an example where it is not possible to obtain an experimental map, but where you can obtain this map by building a digital reconstruction?

HM: There are many examples. Here is one. A small piece of the neocortex, the size of a pinhead, has around 55 types of neurons. They can each potentially form synapses with each other. Experiments would need to map 3,025 possible types of connections. This is a problem because it took all of neuroscience around 40 years to map around 20 of these types of connections, each one taking years and costing more than a million dollars. There are many efforts underway to experimentally map all the synapses involved in the connections and we still do not have such a map. We found four rules of how neurons connect with one another by analyzing a few of these 20 or so connections and developed an algorithm to connect neurons by following these rules. We then validated the algorithm by comparing the results against the connections not used to build the algorithm. We found that only around 2,000 of the 3,025 could actually form connections and could provide a complete predicted map of synapses for each of these connection types. We now have a prediction that there are around 40 million synapses connecting the neurons of a neocortical column of a rat brain. What this shows is that we can complement such an approach with experimental neuroscience to accelerate mapping of the brain.
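As an editorial aside, the arithmetic behind the figures in this answer can be laid out in a few lines. The sketch below uses only the numbers quoted above; the linear extrapolation of the historical mapping rate and the cost floor are naive back-of-envelope assumptions for illustration, not estimates made in the interview, and the function name `mapping_estimates` is hypothetical.

```python
NEURON_TYPES = 55                     # neuron types in a pinhead-sized piece of neocortex
MAPPED_SO_FAR = 20                    # connection types mapped experimentally
YEARS_SO_FAR = 40                     # time it took to map them
COST_PER_CONNECTION_USD = 1_000_000   # "more than a million dollars", so a lower bound
VIABLE_PREDICTED = 2_000              # connection types predicted to actually form


def mapping_estimates() -> dict:
    """Back-of-envelope figures derived from the numbers in the interview."""
    # Every type can potentially connect to every type, itself included.
    possible = NEURON_TYPES ** 2
    # Assumption: the historical rate stays constant (naive linear extrapolation).
    rate_per_year = MAPPED_SO_FAR / YEARS_SO_FAR
    return {
        "possible_connection_types": possible,
        "years_remaining_at_past_rate": (possible - MAPPED_SO_FAR) / rate_per_year,
        "minimum_cost_usd": possible * COST_PER_CONNECTION_USD,
        "predicted_viable_fraction": VIABLE_PREDICTED / possible,
    }


for name, value in mapping_estimates().items():
    print(f"{name}: {value:,.2f}")
```

The first figure reproduces the 3,025 possible connection types quoted above; the remaining figures only indicate the scale of a purely experimental mapping effort if past rates and costs held.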

RM: Do you think that in silico approaches can ultimately become the gold standard for research and replace in vivo techniques?

HM: Simulation neuroscience is a catalyst for experimental neuroscience, not a replacement. One can’t prove anything definitively in simulations. It provides predictions, some of which must eventually be tested with biological experimentation. As we get closer to reconciling and putting all the data and knowledge into a digital copy of the brain, simulation neuroscience may eventually become the first step in any neuroscience study: the first step for asking questions unbounded by technological limits, testing multiple hypotheses before starting expensive experiments, establishing the key biological experiments that are needed to definitively answer the question and doing the first exploratory studies to properly design these experiments. It is faster, easier and, most importantly, it allows one to take all variables into consideration, which experiments just cannot do. Today many experiments on the brain are like taking a cup of water from the ocean and saying that there are no whales in the sea. Please do not get me wrong, I am not at all against experiments. I am an experimentalist myself and we need many, many more experiments.

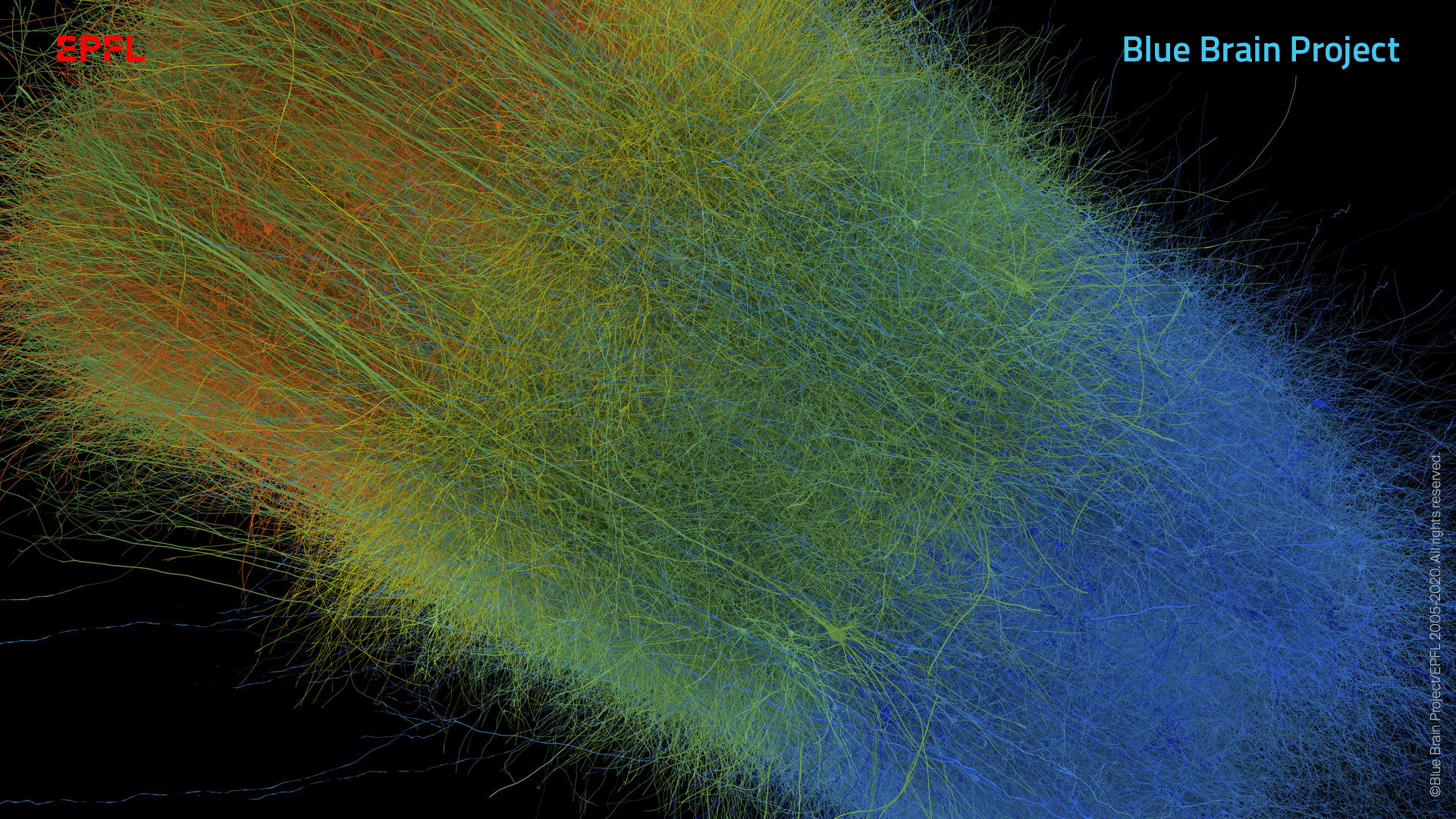

A digitally reconstructed neocortical column from the mouse cortex. Credit: Copyright © 2005-2020 Blue Brain Project/EPFL. All rights reserved.

Simulation neuroscience also allows us to go far beyond the limits of experimental neuroscience and make predictions that we don’t have the technology to even test and sometimes that we can’t even imagine. For example, in a recent paper we predicted that the brain lives in a sea of noise and chaos that quickly becomes organized and predictable when sensory input arrives. That discovery required manipulating the synaptic parameters in a way that is not technically possible today. In another study we could begin understanding what makes us the same and what makes us different by isolating how the neurons and the connections they form influence how they respond to sensory input. It may take a long time before experiments can provide definitive proof for some findings from simulation neuroscience, but that is not uncommon. For example, gravitational waves remained a prediction for a century until the technology was developed to test the theory. Such predictions, however, guide and accelerate our understanding, unbounded by technological limits, and will also guide technological development to allow us to eventually test these theories.

In some respects, simulation neuroscience is analogous to artificial intelligence (AI) outcompeting humans on many tasks simply because AI can look at so many more variables. Predicting aspects of the brain is of course a far more complex task than classifying cats or playing Go, but that is precisely why it is even more necessary. Computers can keep track of trillions of parameters and interactions every second, while humans can only keep track of a few. To me it is obvious that we need computers to help us understand the brain. I think simulation neuroscience will ultimately (but it may take a long time) disrupt experimental neuroscience in the same way that AI is disrupting what we thought were very human functions. The modern AI techniques that are sweeping the world are the automation of functions that are predictable. We see AI as intelligent and we may even fear this intelligence, but in truth, it is because we are shocked to discover that so many of our capabilities and behaviors are predictable and we are afraid that nothing we do is special. The novelty will subside and then we can get on to more interesting questions of intelligence. In the same way, simulation neuroscience is not something that needs to be feared, it is just necessary to reveal all that is predictable. What cannot be predicted will eventually become the most interesting question. However, we cannot answer this question amidst false illusions of what is predictable.

RM: How does a project to simulate the brain compare with other projects?

HM: It took 13 years for around 250 scientists to create the first draft map of the Human Genome Project at a cost of $3 billion. At the time, the project was controversial, and when the genome was announced as mapped, it was not complete, and people were saying that the project had not kept its promises of bringing concrete health benefits. It took another decade or two before the benefits became obvious; the first gene therapies could be developed for cancers and today we can’t imagine any life science without it.

The Large Hadron Collider cost around $4.75 billion and took 10 years to build, not counting the many years of discussion and preparation. Besides discovering the Higgs boson, CERN projects generally also have collateral benefits such as discovering the internet and GPS systems – benefits many would say we could not live without now.

It took 11 years of a massive effort with around 400,000 people at its peak, and the sacrifice of human lives, to get to the moon at a cost of $153 billion in today’s terms. Many people asked, why do we need to go to the moon, don’t we have enough problems to be solved down here? But going to the moon took us beyond our limits, brought us countless new technologies and set the scene for space travel.

Estimates to get humans to Mars before 2050 range from around $200 billion to $1 trillion, and many aspects remain undefined and controversial.

How much effort and money should it take to build a digital copy of our brain that is a living library of how it is designed, how it works and all the ways it can go wrong in diseases? I do believe it is inevitable, but how long this will take to full maturity is difficult to say. I will consider it a success even if it ends up just opening the road for the next generation, like the first flight.